By Tim Higgins and Ben Foldy

U.S. safety investigators delivered a blistering rebuke to the
federal regulator responsible for overseeing the safety of Tesla
Inc.'s advanced driver-assistance system called Autopilot, which
they found contributed to another fatal crash.

Tesla's Autopilot played a role in a crash that killed the
driver of the auto maker's Model X sport-utility vehicle in March
2018 in Mountain View, Calif., the National Transportation Safety
Board said Tuesday.

The agency, better known for its investigations into airplane
crashes, has been increasingly scrutinizing the emergence of
automated-driving technologies and pushing the National Highway
Traffic Safety Administration to do more to ensure the safety of
advanced driver-assistance systems.

"It's time to stop enabling drivers in any partially automated
vehicle to pretend that they have driverless cars because they
don't have driverless cars," NTSB Chairman Robert Sumwalt said
Tuesday. He urged NHTSA to "fulfill its oversight responsibility to
ensure that corrective action is taken when necessary."

The NTSB detailed its findings during a meeting in Washington,
setting the stage for increased pressure on NHTSA, which regulates
cars and has the power to force Tesla to make changes. Unlike
NHTSA, which can require action by car makers, the NTSB only issues
recommendations on how to improve safety.

The NTSB faulted the regulator's investigating arm for not
thoroughly assessing the effectiveness of Tesla's driver-monitoring
system, foreseeable misuse and risks of it being used in ways it
wasn't designed to handle. It urged further evaluation of the
system.

NHTSA will review the report and considers distracted driving to
be a continuing concern, an agency spokesman said in a statement.

Tesla didn't respond to a request for comment.

The NTSB also attributed probable cause of the 2018 crash to the
driver, Walter Huang, who was likely distracted playing a videogame
on his employer-issued cellphone. His SUV veered into a road
barrier at 71 miles an hour during his morning commute along
Highway 101.

The findings won't result in any penalties or other immediate
consequences.

The NTSB and NHTSA have differed on Tesla's Autopilot in the
past as the government tries to adjust to the fast-moving world of
increased automation in the automobile. Regulators are grappling
with how to find the right balance between encouraging potentially
lifesaving technology and ensuring the public is safe.

NHTSA has opened 14 investigations into Tesla crashes involving
driver-assistance systems as part of a broader review of the
technology. Two of those investigations include Tesla vehicles
involved in fatal incidents in the past two months.

To help shape future policies, NHTSA has been soliciting
feedback on new test procedures for the technologies. Congress has
also been considering potential legislation for autonomous
vehicles. At a Senate hearing in November, Tesla's Autopilot was
singled out for criticism by both Mr. Sumwalt and Massachusetts
Democratic Sen. Ed Markey, who repeatedly pressed NHTSA's acting
administrator James Owens about drivers using the system
unsafely.

In 2017, NHTSA found no defect in Tesla's Autopilot after
investigating a fatal 2016 crash in Florida involving Joshua Brown.
The NTSB later concluded the auto maker contributed to the incident
with a technology that allowed the driver to go long periods
without his hands on the wheel and ignore the company's in-car
warnings.

In that incident, Mr. Brown had Autopilot engaged when his Tesla
Model S ran through the underside of a tractor trailer that was
crossing the road. Investigators found that Mr. Brown made no
attempt to stop and the car's data showed the system didn't detect
his hands touching the wheel in the seconds before the impact.

On Tuesday, the NTSB reiterated its findings in Mr. Brown's
crash and highlighted other Tesla crashes in addition to the
Mountain View incident that shared similarities. The incidents
showed prolonged inattentiveness by drivers and suggested that
Tesla's monitoring system was a poor measure of engagement.

Autopilot is the marketing name for a system of functions that
allow Tesla cars to steer, brake and cruise themselves under
certain circumstances. It doesn't amount to self-driving and the
company instructs drivers to keep their hands on the wheel and pay
attention to the roadway.

Following the 2016 Florida crash, Tesla made adjustments to the
system, such as reducing the time a driver's hands can be off the
wheel before getting a warning and adding a three-strikes policy
that turns the system off if the driver continues to ignore the
alerts. Still, Tesla has continued to come under criticism from
experts and safety advocates who say drivers are improperly using
Autopilot.

Tesla Chief Executive Elon Musk has acknowledged that some
drivers are overly confident with Autopilot, but he has vigorously
defended the system, saying his company's data shows its vehicles
are safer than others.

General Motors Co. has deployed similar technology. But its
system includes a camera that monitors a driver's eye movement to
ensure that the driver is paying attention. Tesla has rejected that
kind of technology, saying it is ineffective.

The Mountain View crash heightened concern about automation, in
part because it came soon after a fatal crash in Tempe, Ariz.,
involving a test vehicle used by Uber Technologies Inc. to develop
autonomous vehicles. In the Uber crash, a safety operator sat at
the steering wheel with the job of taking control of the vehicle in
case of emergency. That didn't happen and the Uber vehicle struck
and killed a pedestrian.

The NTSB, in November, found that the immediate cause of the
Uber collision was the failure of the safety operator to closely
monitor the car's driving system and the roadway. The driver
instead was looking at a personal cellphone. The board also faulted
Uber for a lack of a system to address safety operators'
"automation complacency."

Moments after the NTSB concluded its meeting Tuesday, NHTSA said
it took action to suspend passenger operation of 16 autonomous
shuttles operated by EasyMile after one of its passengers was
injured in an unexplained braking incident. The low-speed shuttles
operate in 10 states with safety operators onboard.

The incident occurred in Columbus, Ohio, when a company shuttle
traveling about 7 miles per hour made an emergency stop and a
passenger fell from a seat, an EasyMile spokeswoman said. "We are
running test loops on the ground for further analysis."

Following the Mountain View crash, Tesla attempted to lay the
blame for the crash on the driver of the Model X, saying the
vehicle's data showed the system didn't detect Mr. Huang's hands on
the wheel in the moments before the crash. Others have noted that
the finicky system may not have detected his hands.

"The crash happened on a clear day with several hundred feet of
visibility ahead, which means that the only way for this accident
to have occurred is if Mr. Huang wasn't paying attention to the
road, despite the car providing multiple warnings to do so," a
Tesla spokesman said at the time in a statement.

Mr. Huang's family is suing Tesla over the crash. Their lawyer
told the NTSB that Mr. Huang had complained to his family that
Autopilot was acting up in the section of highway where his car
veered into a center divider.

Autopilot's limitations steered the vehicle into the divider and
Tesla's "ineffective" monitoring of Mr. Huang's use of Autopilot
contributed to the crash, the NTSB found.

Also on Tuesday, the NTSB said California's lack of maintenance
of the median contributed to the severity of the crash, saying Mr.
Huang would likely have survived otherwise. A different crash in
the same spot earlier in the month had previously damaged the
barrier.

Write to Tim Higgins at Tim.Higgins@WSJ.com and Ben Foldy at
Ben.Foldy@wsj.com

(END) Dow Jones Newswires

February 25, 2020 18:35 ET (23:35 GMT)

Copyright (c) 2020 Dow Jones & Company, Inc.

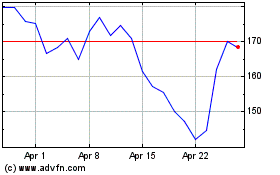

Tesla (NASDAQ:TSLA)

Historical Stock Chart

From Aug 2024 to Sep 2024

Tesla (NASDAQ:TSLA)

Historical Stock Chart

From Sep 2023 to Sep 2024