Company says new policies address concerns, but activists seek greater efforts

By Sarah E. Needleman and Jeff Horwitz

This article is being republished as part of our daily reproduction of WSJ.com articles that also appeared in the U.S. print edition of The Wall Street Journal (July 10, 2020).

Civil-rights advocates are increasing pressure on Facebook Inc. advertisers to halt spending on the company's platforms, saying it has done too little to police hateful and other problematic content.

Groups including the Anti-Defamation League and the NAACP have enlisted hundreds of companies in the boycott campaign, while lawyers Facebook hired to audit its handling of civil-rights issues issued a report this week saying the company made progress on several fronts but has more work to do.

Facebook has acknowledged its shortcomings and pointed to new policies, additional spending and other efforts aimed at trying to address concerns.

Here is a look at what Facebook is doing -- and not doing -- in response.

What specific demands are civil-rights groups making of Facebook?

Stop Hate for Profit, the name of the coalition behind the boycott effort, has on its website 10 recommendations for Facebook, ranging from the specific to the sweeping.

The group says Facebook should hire an executive with civil-rights expertise for a post in its C-suite, refund advertisers whose ads were shown next to content later removed for violating Facebook's terms of service and submit to regular independent audits of identity-based hate and misinformation on its platform.

On Wednesday, Facebook said it plans to hire a vice president for civil rights, a role that a spokeswoman described as "very senior" and that is intended to lead a team built out over time. She also said the company issues refunds to advertisers in some instances.

The boycott groups credit Facebook with some progress on those points but say it is insufficient. The coalition wants Facebook to adopt "common-sense changes" to its policies to reduce hate on the platform and remove content that could inspire people to commit violence; stop amplifying all groups associated with hate, misinformation or conspiracies; and remove groups focused on "white supremacy, militia, anti-Semitism, violent conspiracies, Holocaust denialism, vaccine misinformation and climate denialism."

How does Facebook define hate speech?

Facebook's community standards define hate speech as attacks on people based on about a dozen "protected characteristics" including race, religious affiliation, national origin and gender identity. Attacks include violent or dehumanizing speech, statements of inferiority or calls for exclusion or segregation, it says.

Facebook has broadened its definition of hate speech and who is protected over time. Last month, it said it would stop allowing ads alleging that a particular race, religion or identity group posed a threat to others. Such content is still allowed in unpaid posts.

Civil-rights advocates say the rules remain too narrow, allowing white supremacists to dodge crackdowns by avoiding certain keywords that would surface in searches for hate speech. The Anti-Defamation League last month listed several examples of hateful or extremist posts on Facebook that still appear near major companies' ads on the platform.

One example is of an ad from home-sharing company Airbnb Inc. that the League said appeared next to a post from an antigovernment militia movement called the Three Percenters, about the recent decision by PepsiCo Inc.'s Quaker Oats brand to retire its Aunt Jemima branding. In another, the League said an ad from human-resources company Randstad Holding NV appeared next to a video from a large Facebook group called Q-Anon Patriots that accused the media of promoting cannibalism by attempting to draw a connection between a sculpture owned by former lobbyist Tony Podesta and deceased serial killer Jeffrey Dahmer.

The Facebook spokeswoman said it takes aggressive action against groups and people who promote hate. The company last month said it banned hundreds of accounts deemed to have links to white supremacist organizations or groups that promote violence.

How does Facebook handle speech by political figures compared with everyday users?

Some of the biggest recent battles over content have involved President Trump's social-media posts.

When he wrote "when the looting starts, the shooting starts" in response to protests and unrest after the police killing of George Floyd, Twitter Inc. labeled the post as violating its policies on glorifying violence; Facebook left the same comments untouched.

Mr. Trump responded that he wasn't urging police to shoot protesters, adding that "nobody should have any problem with this other than the haters, and those looking to cause trouble on social media."

Facebook has said it doesn't fact-check political speech from politicians because it doesn't believe private companies should be arbiters of what is true and what isn't.

The boycott coalition says Facebook should eliminate its exemption for politicians.

Last month CEO Mark Zuckerberg said Facebook would start labeling posts by any user that violate content rules but are deemed newsworthy. For example, the company determined that posts showing a 1972 war photo of a naked Vietnamese girl fleeing napalm bombs were newsworthy and could remain on the platform, after Facebook initially took down that content under restrictions on child nudity.

Mr. Zuckerberg also said Facebook would add safeguards to prevent content that promotes voter suppression and ads that depict immigrants or asylum seekers as inferior. Facebook would have to decide whether nonadvertising content that is reported as abusive toward immigrants should be removed.

What tools does Facebook use to moderate content on its platform?

Facebook says it has more than 35,000 people working on safety and security, including more than 350 employees with expertise in law enforcement, national security, counterterrorism intelligence and academic studies in radicalization. Facebook also uses artificial intelligence to detect prohibited content, and says it removes most of that content before there is a user report. The company says it blocks millions of fake accounts from being created every day.

The amount of effort Facebook puts into detecting violations is a point of contention. The civil-rights groups say the company has enormous financial resources but still routinely fails to catch toxic content posted within group pages and elsewhere. They say all individuals facing severe hate and harassment should be able to connect with a live Facebook employee.

The Facebook spokeswoman said users can moderate comments on their posts, block other users and control their posts' visibility by creating a restricted list. She also said the company made changes allowing groups to be removed if an administrator encourages or creates posts that violate platform rules.

To help address where lines should be drawn on hate speech, Facebook has created an independent content governance board that includes human-rights lawyers and free-speech advocates. The group likely will be fully operational late this year.

What is Facebook's 'transparency' report?

The report created by Facebook shows how the company enforces its community standards and content restrictions, responds to data requests and protects intellectual property. The company's most recent transparency report, released in May, says Facebook removed 9.6 million pieces of content with hate speech in the first quarter, up from 5.7 million in the fourth quarter of 2019.

The civil-rights groups say they don't trust Facebook to check its own work. They say its report doesn't address hate speech that isn't reported or whether it dismisses concerns about content that is reported as abusive but isn't removed.

"A 'transparency report' is only as good as its author is independent," the Stop Hate for Profit website says.

The Facebook spokeswoman said the company has committed to providing more insight into how it enforces its hate-speech policies in the coming year.

Does Facebook remove whole groups from its platform?

Facebook has struggled to articulate exactly when a private group becomes a threat to other users, but it is a sliding scale. Groups that Facebook considers spreaders of misinformation or vitriolic content can be removed from its algorithmic recommendation systems. Permanent removal is a possibility for entities deemed a threat to others, but Mr. Zuckerberg has voiced reluctance to entirely prevent users from forming communities around subjects they care about.

Facebook also removes groups and pages it identifies as part of coordinated inauthentic behavior.

Who is ultimately in charge of what types of content are allowed on the company's social-media platforms?

Mr. Zuckerberg has final say on content policy matters. The CEO guided Facebook's handling of the president's social-media posts -- a focus of its dispute with civil-rights groups.

Mr. Zuckerberg has argued for free expression online, saying in a speech at Georgetown University in October that Facebook shouldn't be the ultimate arbiter of truth. "In a democracy, I believe people should decide what is credible, not tech companies," he said. Mr. Zuckerberg also said creating rules that prohibit free speech can have unintended consequences.

The Facebook spokeswoman said that the company makes tens of thousands of decisions about content daily and that the process depends on its roughly 15,000 content reviewers world-wide. In some cases, content decisions are escalated to people on different teams, and they are handled routinely by on-call content policy specialists around the world, with senior leadership weighing in on a small number of decisions, she said.

Write to Sarah E. Needleman at sarah.needleman@wsj.com and Jeff Horwitz at Jeff.Horwitz@wsj.com

(END) Dow Jones Newswires

July 10, 2020 02:47 ET (06:47 GMT)

Copyright (c) 2020 Dow Jones & Company, Inc.

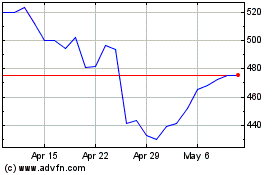

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Aug 2024 to Sep 2024

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Sep 2023 to Sep 2024