By Michael Totty

As artificial intelligence spreads into more areas of public and

private life, one thing has become abundantly clear: It can be just

as biased as we are.

AI systems have been shown to be less accurate at identifying

the faces of dark-skinned women, to give women lower credit-card

limits than their husbands, and to be more likely to incorrectly

predict that Black defendants will commit future crimes than

white defendants. Racial and gender bias has been found in job-search ads,

software for predicting health risks and searches for images of

CEOs.

How could this be? How could software designed to take the bias

out of decision making, to be as objective as possible, produce

these kinds of outcomes? After all, the purpose of artificial

intelligence is to take millions of pieces of data and from them

make predictions that are as error-free as possible.

But as AI has become more pervasive -- as companies and

government agencies use AI to decide who gets loans, who needs more

health care and how to deploy police officers, and more --

investigators have discovered that focusing just on making the

final predictions as error-free as possible can mean that the

errors aren't always distributed equally. Instead, the predictions

can often reflect and exaggerate the effects of past discrimination

and prejudice.

In other words, the more AI focuses on getting only the big

picture right, the more prone it is to being less accurate when it

comes to certain segments of the population -- in particular women

and minorities. And the impact of this bias can be devastating on

swaths of the population -- for instance, denying loans to

creditworthy women much more frequently than denying loans to

creditworthy men.
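
To make the point concrete, here is a minimal Python sketch with made-up numbers. The records, the group labels "A" and "B" and the `accuracy_by_group` helper are all hypothetical and are not taken from any system described in this article. It shows how a model can look accurate overall while erring far more often for a smaller group.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return (overall_accuracy, {group: accuracy}).

    records is a list of (group, predicted, actual) tuples.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        if predicted == actual:
            correct[group] += 1
    overall = sum(correct.values()) / sum(total.values())
    per_group = {g: correct[g] / total[g] for g in total}
    return overall, per_group


# Hypothetical data: 90 applicants from group "A", 10 from group "B".
# The model is right for 88 of the 90 in A but only 6 of the 10 in B.
records = (
    [("A", 1, 1)] * 88 + [("A", 1, 0)] * 2
    + [("B", 1, 1)] * 6 + [("B", 0, 1)] * 4
)

overall, per_group = accuracy_by_group(records)
print(f"overall accuracy: {overall:.2f}")
for group, acc in sorted(per_group.items()):
    print(f"group {group} accuracy: {acc:.2f}")
```

In this toy data the model is right 94% of the time overall, yet only 60% of the time for group B. Tuning only the overall figure would leave that gap untouched.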

In response, the AI world is making a strong push to root out

this bias. Academic researchers have devised techniques for

identifying when AI makes unfair judgments, and the designers of AI

systems are trying to improve their models to deliver

more-equitable results. Large tech companies have introduced tools

to identify and remove bias as part of their AI offerings.

As the tech industry tries to make AI fairer, though, it faces

some significant obstacles. For one, there is little agreement

about exactly what "fairness" looks like. Do we want an algorithm

that makes loans without regard to race or gender? Or one that

approves loans equally for men and women, or whites and Blacks? Or

one that takes some different approach to fairness?

What's more, making AI fairer can sometimes make it less

accurate. Skeptics might argue that this means the predictions,

however biased, are the correct ones. But in fact, the algorithm is

already making wrong decisions about disadvantaged groups. Reducing

those errors -- and the unfair bias -- can mean accepting a certain

loss of overall statistical accuracy. So the argument ends up being

a question of balance.

In AI as in the rest of life, less-biased results for one group

might look less fair for another.

"Algorithmic fairness just raises a lot of these really

fundamental thorny justice and fairness questions that as a society

we haven't really quite figured out how to think about," says Alice

Xiang, head of fairness, transparency and accountability research

at the Partnership on AI, a nonprofit that researches and advances

responsible uses of AI.

Here's a closer look at the work being done to reduce bias in AI

-- and why it's so hard.

Identifying bias

Before bias can be rooted out of AI algorithms, it first has to

be found. International Business Machines Corp.'s AI Fairness 360

and the What-if tool from Alphabet Inc.'s Google are some of the

many open-source packages that companies, researchers and the

public can use to audit their models for biased results.

One of the newest offerings is the LinkedIn Fairness Toolkit, or

LiFT, introduced in August by Microsoft Corp.'s professional

social-network unit. The software tests for biases in the data used

to train the AI, the model and its performance once deployed.

Fixing the data

Once bias is identified, the next step is removing or reducing

it. And the place to start is the data used to develop and train

the AI model. "This is the biggest culprit," says James Manyika, a

McKinsey senior partner and chairman of the McKinsey Global

Institute.

There are several ways that problems with data can introduce

bias. Certain groups can be underrepresented, so that predictions

for that group are less accurate. For instance, in order for a

facial-recognition system to identify a "face," it needs to be

trained on a lot of photos to learn what to look for. If the

training data contains mostly faces of white men and few Blacks, a

Black woman re-entering the country might not get an accurate match

in the passport database, or a Black man could be inaccurately

matched with photos in a criminal database. A system designed to

distinguish the faces of pedestrians for an autonomous vehicle

might not even "see" a dark-skinned face at all.

Gender Shades, a 2018 study of three commercial

facial-recognition systems, found they were much more likely to

fail to recognize the faces of darker-skinned women than

lighter-skinned men. IBM's Watson Visual Recognition performed the

worst, with a nearly 35% error rate for dark-skinned women compared

with less than 1% for light-skinned males. One reason was that

databases used to test the accuracy of facial-recognition systems

were unrepresentative; one common benchmark contained more than 77%

male faces and nearly 84% white ones, according to the study,

conducted by Joy Buolamwini, a researcher at the MIT Media Lab, and

Timnit Gebru, currently a senior research scientist at Google.

Most researchers agree that the best way to tackle this problem

is with bigger and more representative training sets. Apple Inc.,

for instance, was able to develop a more accurate

facial-recognition system for its Face ID, used to unlock iPhones,

in part by training it on a data set of more than two billion

faces, a spokeswoman says.

Shortly after the Gender Shades paper, IBM released an updated

version of its visual-recognition system using broader data sets

for training and an improved ability to recognize images. The

updated system reduced error rates by 50%, although it was still

far less accurate for darker-skinned females than for light-skinned

males.

Since then, several large tech companies have decided facial

recognition carries too many risks to support -- no matter how low

the error rate. IBM in June said it no longer intends to offer

general-purpose facial-recognition software. The company was

concerned about the technology's use by governments and police for

mass surveillance and racial profiling.

"Even if there was less bias, [the technology] has

ramifications, it has an impact on somebody's life," says Ruchir

Puri, chief scientist of IBM Research. "For us, that is more

important than saying the technology is 95% accurate."

Reworking algorithms

When the training data isn't accessible or can't be changed,

other techniques can be used to change machine-learning algorithms

so that results are fairer.

One way that bias enters into AI models is that in their quest

for accuracy, the models can base their results on factors that can

effectively serve as proxies for race or gender even if they aren't

explicitly labeled in the training data.

It's well known, for instance, that in credit scoring, ZIP Codes

can serve as a proxy for race. AI, which uses millions of

correlations in making its predictions, can often base decisions on

all sorts of hidden relationships in the data. If those

correlations lead to even a 0.1% improvement in predictive

accuracy, the model will then use an inferred race in its risk

predictions, and won't be "race blind."

Because past discriminatory lending practices often unfairly

denied loans to creditworthy minority and women borrowers, some

lenders are turning to AI to help them to expand loans to those

groups without significantly increasing default risk. But first the

effects of the past bias have to be stripped from the

algorithms.

IBM's Watson OpenScale, a tool for managing AI systems, uses a

variety of techniques for lenders and others to correct their

models so they don't produce biased results.

One of the early users of OpenScale was a lender that wanted to

make sure that its credit-risk model didn't unfairly deny loans to

women. The model was trained on 50 years of historical lending data

that reflected historical biases, so women were more

likely than men to be considered credit risks even when they

weren't.

Using a technique called counterfactual modeling, the bank could

flip the gender associated with possibly biased variables from

"female" to "male" and leave all the others unchanged. If that

changed the prediction from "risk" to "no risk," the bank could

adjust the importance of the variables or simply ignore them to

make an unbiased loan decision. In other words, the bank could

change how the model viewed that biased data, much like glasses can

correct nearsightedness.

If flipping gender doesn't change the prediction, the variable

-- insufficient income, perhaps -- is probably a fair measure of

loan risk, even though it might also reflect more deeply entrenched

societal biases, like lower pay for women.
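
The flip test described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not IBM's OpenScale code: `toy_credit_model`, its scoring rule and the applicant fields are hypothetical, and the gender penalty is planted on purpose so the test has something to find.

```python
def toy_credit_model(applicant):
    """Hypothetical stand-in for a trained model; returns "risk" or "no risk".

    The gender penalty is deliberate, to imitate bias learned from history.
    """
    score = applicant["income"] / 1000 + applicant["years_employed"] * 2
    if applicant["gender"] == "female":
        score -= 15  # the planted bias we want the test to detect
    return "no risk" if score >= 60 else "risk"


def counterfactual_flip(model, applicant, attribute="gender", flipped_value="male"):
    """Return (original_prediction, flipped_prediction, changed).

    Only the named attribute is changed; every other field stays the same.
    """
    original = model(applicant)
    counterfactual = dict(applicant)  # copy so the input is left untouched
    counterfactual[attribute] = flipped_value
    flipped = model(counterfactual)
    return original, flipped, original != flipped


# Applicant whose outcome depends on gender in the toy model.
borderline = {"gender": "female", "income": 50_000, "years_employed": 8}
print(counterfactual_flip(toy_credit_model, borderline))

# Applicant whose low income drives the outcome regardless of gender.
low_income = {"gender": "female", "income": 20_000, "years_employed": 3}
print(counterfactual_flip(toy_credit_model, low_income))
```

For the first applicant the prediction changes when gender is flipped, which flags the model's use of gender as suspect. For the second it stays "risk" either way, matching the case where income rather than gender is doing the work.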

"You're debiasing the model by changing its perspective on the

data," says Seth Dobrin, vice president of data and AI and chief

data officer of IBM Cloud and Cognitive Software. "We're not fixing

the underlying data. We're tuning the model."

Zest AI, a Los Angeles-based company that provides AI software

for lenders, uses a technique called adversarial debiasing to

mitigate biases in its credit models. It pits a model trained on

historical loan data against an algorithm trained to look for bias,

forcing the original model to reduce or adjust the factors that

lead to biased results.

For example, people with shorter credit histories are

statistically more likely to default, but credit history can often

be a proxy for race -- unfairly reflecting the difficulties Blacks

and Hispanics have historically faced in getting loans. So, without

a long credit history, people of color are more likely to be denied

loans, whether they're likely to repay or not.

The standard approach for such a factor might be to remove it

from the calculation, but that can significantly hurt the accuracy

of the prediction.

Zest's fairness model doesn't eliminate credit history as a

factor; instead it will automatically reduce its significance in

the credit model, offsetting it with the hundreds of other credit

factors.

The result is a lending model that has two goals -- to make its

best prediction of credit risk but with the restriction that the

outcome is fairer across racial groups. "It's moving from a single

objective to a dual objective," says Sean Kamkar, Zest's head of

data science.

Some accuracy is sacrificed in the process. In one test, an auto

lender saw a 4% increase in loan approvals for Black borrowers,

while the model showed a 0.2% decline in accuracy, in terms of

likelihood of repayment. "It's staggering how cheap that trade-off is,"

Mr. Kamkar says.

Over time, AI experts say, the models will become more accurate

without the adjustments, as data from new successful loans to women

and minorities get incorporated in future algorithms.

Adjusting results

When the data or the model can't be fixed, there are ways to

make predictions less biased.

LinkedIn's Recruiter tool is used by hiring managers to identify

potential job candidates by scouring millions of LinkedIn

profiles. Results of a search are scored and ranked based on the

sought-for qualifications of experience, location and other

factors.

But the rankings can reflect longstanding racial and gender

discrimination. Women are underrepresented in science, technical

and engineering jobs, and as a result might show up far down in the

rankings of a traditional candidate search, so that an HR manager

might have to scroll through page after page of results before

seeing the first qualified women candidates.

In 2018, LinkedIn revised the Recruiter tool to ensure that

search results on each page reflect the gender mix of the entire

pool of qualified candidates, and don't penalize women for low

representation in the field. For example, LinkedIn posted a recent

job search for a senior AI software engineer that turned up more

than 500 candidates across the U.S. Because 15% of them were women,

four women appeared in the first page of 25 results.

"Seeing women appear in the first few pages can be crucial to

hiring female talent," says Megan Crane, the LinkedIn technical

recruiter performing the search. "If they were a few pages back

without this AI to bring them to the top, you might not see them or

might not see as many of them."
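
The arithmetic behind that first page can be sketched as follows. This is an illustration only, not LinkedIn's actual ranking code: the `rerank_page` function, the candidate records and the scores are hypothetical, and the pool is fixed at exactly 500 candidates, 15% of them women, to mirror the example above.

```python
def rerank_page(candidates, page_size=25):
    """Build one results page whose gender mix mirrors the whole pool.

    candidates is a list of dicts with "name", "gender" and "score" keys.
    Each gender gets a share of the page proportional to its share of the
    pool, filled with that gender's highest-scoring candidates.
    """
    pool_size = len(candidates)
    by_gender = {}
    for cand in sorted(candidates, key=lambda c: c["score"], reverse=True):
        by_gender.setdefault(cand["gender"], []).append(cand)

    page = []
    for members in by_gender.values():
        # Rounding can leave a page slightly over or under in other pools;
        # a real system would need a rule for that. Here it adds up exactly.
        quota = round(page_size * len(members) / pool_size)
        page.extend(members[:quota])

    page.sort(key=lambda c: c["score"], reverse=True)
    return page[:page_size]


# Hypothetical pool: 425 men and 75 women, with the women scored lower
# so that a plain score ranking shows no women on the first page.
pool = (
    [{"name": f"M{i}", "gender": "male", "score": 1000 - i} for i in range(425)]
    + [{"name": f"W{i}", "gender": "female", "score": 500 - i} for i in range(75)]
)

plain_first_page = sorted(pool, key=lambda c: c["score"], reverse=True)[:25]
reranked_first_page = rerank_page(pool)

print(sum(c["gender"] == "female" for c in plain_first_page))
print(sum(c["gender"] == "female" for c in reranked_first_page))
```

Fifteen percent of a 25-result page is 3.75, which rounds to the four women in the example. A plain score ranking of this toy pool puts none on the first page.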

Other tools give users the ability to arrange the output of AI

models to suit their own needs.

Pinterest Inc.'s search engine is widely used by people hunting

for style and beauty ideas, but until recently users complained

that it was frequently difficult to find beauty ideas for specific

skin colors. A search for "eye shadow" might require adding other

keywords, such as "dark skin," to see images that didn't depict

only whites. "People shouldn't have to work extra hard by adding

additional search terms to feel represented," says Nadia Fawaz,

Pinterest's technical lead for inclusive AI.

Improving the search results required labeling a more diverse

set of image data and training the model to distinguish skin tones

in the images. The software engineers then added a search feature

that lets users refine their results by skin tones ranging from

light beige to dark brown.

When searchers select one of 16 skin tones in four different

palettes, results are updated to show only faces within the desired

range.

After an improved version was released this summer, Pinterest

says, the model is three times as likely to correctly identify

multiple skin tones in search results.

Struggling with pervasive issues

Despite the progress, some problems of AI bias resist

technological fixes.

For instance, just as groups can be underrepresented in training

data, they can also be overrepresented. This, critics say, is a

problem with many criminal-justice AI systems, such as "predictive

policing" programs used to anticipate where criminal activity might

occur and prevent crime by deploying police resources to patrol

those areas.

Blacks are frequently overrepresented in the arrest data used in

these programs, the critics say, because of discriminatory policing

practices. Because Blacks are more likely to be arrested than

whites, that can reinforce existing biases in law enforcement by

increasing patrols in predominantly Black neighborhoods, leading to

more arrests and runaway feedback loops.
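
A deliberately extreme toy simulation shows how such a loop can run away. It is a sketch under stated assumptions, not a model of any real predictive-policing product: the `simulate_patrols` function, the neighborhood names and the numbers are hypothetical, both neighborhoods are assumed to have identical underlying crime, and the single daily patrol always goes wherever recorded arrests are highest.

```python
def simulate_patrols(initial_arrests, days, arrests_per_patrol_day=2):
    """Send the one daily patrol to the neighborhood with the most recorded arrests.

    Both neighborhoods are assumed to have the same true crime rate, so a
    patrol records the same number of arrests wherever it is sent. Arrests
    are only recorded where the patrol actually goes.
    """
    arrests = dict(initial_arrests)  # copy so the caller's data is unchanged
    for _ in range(days):
        target = max(arrests, key=arrests.get)
        arrests[target] += arrests_per_patrol_day
    return arrests


# Hypothetical starting point: North has one more recorded arrest than South.
start = {"North": 11, "South": 10}
print(simulate_patrols(start, days=30))
# prints {'North': 71, 'South': 10}
```

A one-arrest difference in the starting data sends every patrol to the same neighborhood, and the arrest record there grows while the other stays flat, even though the two are identical by construction.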

"If your data contains that sort of human bias already, we

shouldn't expect an algorithm to somehow magically eradicate that

bias in the models that it builds," says Michael Kearns, a

professor of computer and information science at the University of

Pennsylvania and the co-author of "The Ethical Algorithm."

(It may be possible to rely on different data. PredPol Inc., a

Santa Cruz, Calif., maker of predictive-policing systems, bases its

risk assessments on reports by crime victims, not on arrests or on

crimes like drug busts or gang activity. Arrests, says Brian

MacDonald, PredPol's chief executive, are poor predictors of actual

criminal activity, and with them "there's always the possibility of

bias, whether conscious or subconscious.")

Then there's the lack of agreement about what is fair and

unbiased. To many, fairness means ignoring race or gender and

treating everyone the same. Others argue that extra protections --

such as affirmative action in university admissions -- are needed

to overcome centuries of systemic racism and sexism.

In the AI world, scientists have identified many different ways

to define and measure fairness, and AI can't be made fair on all of

them. For example, a "group unaware" model would satisfy those who

believe it should be blind to race or gender, while an

equal-opportunity model might require taking those characteristics

into account to produce a fair outcome. Some proposed changes could

be legally questionable.
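
The tension between those two definitions shows up even in a tiny example. The Python sketch below uses made-up applicants, scores and thresholds, and the `true_positive_rate` helper is hypothetical. It compares a single "group unaware" cutoff with group-specific cutoffs chosen so that applicants who would repay are approved at the same rate in both groups, which is one common reading of "equal opportunity."

```python
def true_positive_rate(applicants, thresholds):
    """Per group, the share of applicants who would repay that get approved.

    applicants is a list of (group, score, repays) tuples; thresholds maps
    each group to the minimum score needed for approval.
    """
    approved = {}
    qualified = {}
    for group, score, repays in applicants:
        if not repays:
            continue  # only applicants who would repay count toward this rate
        qualified[group] = qualified.get(group, 0) + 1
        if score >= thresholds[group]:
            approved[group] = approved.get(group, 0) + 1
    return {g: approved.get(g, 0) / qualified[g] for g in qualified}


# Hypothetical applicants. Group B's scores run lower, for example because
# of shorter credit histories, even among people who would repay.
applicants = [
    ("A", 80, True), ("A", 70, True), ("A", 65, True), ("A", 55, True), ("A", 50, False),
    ("B", 70, True), ("B", 58, True), ("B", 52, True), ("B", 45, True), ("B", 40, False),
]

group_unaware = {"A": 60, "B": 60}      # same cutoff for everyone
equal_opportunity = {"A": 60, "B": 50}  # cutoffs chosen to equalize the rates

print(true_positive_rate(applicants, group_unaware))
print(true_positive_rate(applicants, equal_opportunity))
```

With one cutoff, 75% of group A's creditworthy applicants are approved but only 25% of group B's. Equalizing those rates requires treating the groups differently, which is exactly what the group-unaware definition rules out.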

Meanwhile, some people question how much we should be relying on

AI to make critical decisions in the first place. In fact, many of

the fixes require having a human in the loop to ultimately make a

decision about what's fair. Pinterest, for instance, relied on a

diverse group of designers to evaluate the performance of its

skin-tone search tool.

Many technologists remain optimistic that AI could be less

biased than its makers. They say that AI, if done correctly, can

replace the racist judge or sexist hiring manager, treat everyone

equitably and make decisions that don't unfairly discriminate.

"Even when AI is impacting our civil liberties, the fact is that

it's actually better than people," says Ayanna Howard, a roboticist

and chair of the School of Interactive Computing at the Georgia

Institute of Technology.

AI "can even get better and better and better and be less

biased," she says. "But we also have to make sure that we have our

freedom to also question its output if we do think it's wrong."

Mr. Totty is a writer in San Francisco. He can be reached at

reports@wsj.com.

(END) Dow Jones Newswires

November 03, 2020 10:15 ET (15:15 GMT)

Copyright (c) 2020 Dow Jones & Company, Inc.

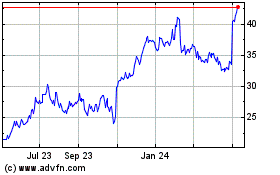

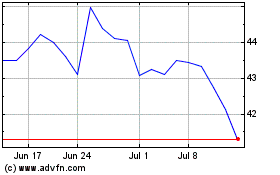

Pinterest (NYSE:PINS)

Historical Stock Chart

From Mar 2024 to Apr 2024

Pinterest (NYSE:PINS)

Historical Stock Chart

From Apr 2023 to Apr 2024