By Sam Schechner

PARIS -- Facebook Inc. said Wednesday it would bar people who

post or share terrorist propaganda from broadcasting live video on

its service, after the streaming feature was used to broadcast the

assault in March on mosques in New Zealand.

The step is the company's most concrete response yet to pressure

to restrict use of the feature following the attack in Christchurch

that killed 51 people. But the change disappointed some who

demanded stronger measures.

Facebook's new policy is part of a package of commitments from

governments and tech companies, dubbed the "Christchurch Call,"

aimed at stemming the tide of extremist content on the internet.

The three-page document, released Wednesday after its adoption

by countries including France, New Zealand, Canada, the U.K.,

Jordan and Indonesia, presents a series of general promises to

develop policies and regulations to reduce the use of internet

services for disseminating extremist content -- without undermining

free expression online.

The White House on Wednesday said it agreed with the

"overarching message of the call" but wasn't "currently in a

position to join the endorsement." The U.S. has long resisted

putting government limits on expression, and instead has encouraged

tech companies to do more themselves.

In that vein, the document offers several commitments from

online providers including Facebook, Twitter Inc. and Alphabet

Inc.'s Google, owner of YouTube. Among them is to immediately

implement measures to reduce the risk that anyone can use

live-streaming to broadcast extremist content.

A Facebook spokeswoman said such a restriction would have

prevented the alleged shooter in the mosque attacks in March from

using his Facebook account to live stream the action.

Critics, however, said Facebook's new policy isn't sufficient to

prevent bad actors from live streaming violence on its platform.

The response highlights the difficulty governments and technology

firms face in agreeing to concrete measures relating to even a

relatively noncontroversial issue like blocking terrorist

propaganda.

"The strong feeling in New Zealand is 'This is not good

enough'," said Alistair Knott, an associate professor of computer

science at the University of Otago. "Even if you've been bad and

you're on some list, you can just get another Facebook account.

It's the easiest thing in the world."

Mr. Knott said a requirement that Facebook users apply for a

license to post live video would be more effective at screening out

those interested in broadcasting violence.

The Christchurch Call commitments also address, albeit briefly,

a broader criticism that some researchers have leveled against tech

companies: that their products, which are designed to maximize the

time spent using them by providing content tailored to users'

interests, have the effect of channeling users toward more

polarizing content.

The online firms have also committed to "review the operation of

algorithms that may drive users toward and/or amplify terrorist and

violent extremist content." They said they would explore ways to

redirect users from extremist content toward alternatives, dubbed

counter-narratives -- something with which they have

experimented.

Facebook said ahead of Wednesday's publication of the

Christchurch Call that it would impose a "one-strike" rule for its

streaming feature. People who have violated certain Facebook rules,

including its restrictions on posting terrorist content without

context, would be prevented from using the company's live-video

streaming feature to broadcast to anyone else on Facebook for a

limited time, for instance 30 days.

"Following the horrific terrorist attacks in New Zealand, we've

been reviewing what more we can do to limit our services from being

used to cause harm or spread hate," Guy Rosen, Facebook's vice

president for integrity, said in a blog post.

Facebook's move follows that of YouTube to restrict its

live-streaming feature to users who have more than 1,000 subscribers.

Live video has been a focus of concern because of several recent

incidents in which disturbing or extremist content was broadcast

live. Tech companies say it is more difficult for them to detect

what is going on in live streams, as opposed to still images or

previously recorded video.

The practices of tech companies are under growing pressure on a

number of fronts. The European Union, which has one of the world's

most comprehensive privacy laws, recently passed a copyright

directive that imposes new restrictions and obligations on big

internet companies. After several investigations into whether tech

giants are violating competition rules, some politicians are

calling for them to be broken up. And a number of countries -- most

recently France -- have proposed tough new rules for how

social-media firms police hate speech and cyberbullying on their

platforms.

Curbing terrorist content -- including propaganda, recruitment

videos and material depicting attacks -- has been less

controversial because it is easier to draw a line around what

should be removed. Facebook and Google both have automated tools to

detect Islamic State content, for instance. Nevertheless, within

the EU, the G-7 and other international venues, tech companies have

come under pressure to do more to speed up removal of such

content.

The Christchurch Call is a joint initiative of New Zealand Prime

Minister Jacinda Ardern, launched after the mosque attacks in her

country, and French President Emmanuel Macron, who added it to a

broader summit with tech executives he is hosting on Wednesday. Organizers

have met with experts on multiple continents to figure out how to

limit the spread of terror content after Facebook left footage of

the Christchurch attack online for nearly an hour.

-- Jon Emont in Hong Kong contributed to this article.

Write to Sam Schechner at sam.schechner@wsj.com

(END) Dow Jones Newswires

May 15, 2019 13:53 ET (17:53 GMT)

Copyright (c) 2019 Dow Jones & Company, Inc.

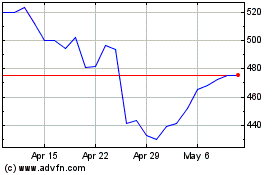

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Mar 2024 to Apr 2024

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Apr 2023 to Apr 2024